For people with severe mobility impairments who cannot operate a traditional joystick, standard powered wheelchairs are effectively inaccessible. Joystick controls assume a level of manual dexterity that many users simply don’t have because of conditions such as spinal cord injury, muscular dystrophy, or cerebral palsy.

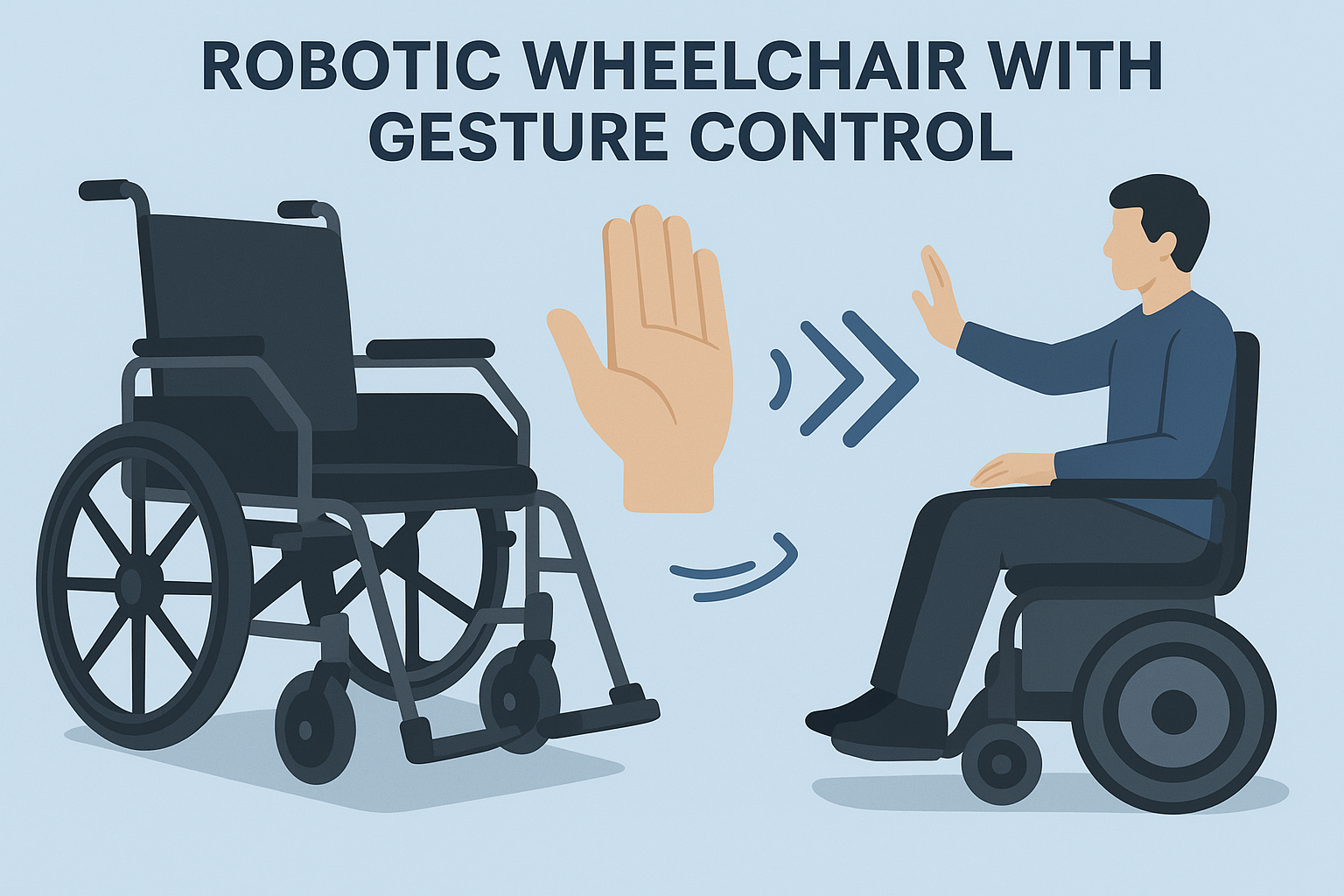

We set out to change that. The result is a robotic wheelchair that responds to natural hand and head gestures, giving users intuitive, joystick-free control over their mobility. Here’s how we built it.

The Challenge

Traditional powered wheelchairs rely on a joystick controller. While effective for many users, this interface excludes individuals who lack fine motor control in their hands. Existing alternatives — such as sip-and-puff systems or chin controls — tend to be expensive, have steep learning curves, and offer limited directional control.

We wanted to build something different: a wheelchair that understands natural gestures and translates them into smooth, safe movement. The goals were clear:

- Intuitive control — users should be able to direct the wheelchair with natural movements they can already perform

- Safety first — the system must include obstacle detection and emergency stop capabilities

- Affordability — the solution should be accessible to more people, not just those who can afford premium assistive technology

- Adaptability — different users have different abilities, so the control system must be customisable

Our Approach

The project combined expertise in robotics, computer vision, embedded systems, and human-centred design. We followed our standard four-stage development process: Discovery, Innovation, Development, and Optimisation.

Gesture Recognition System

The core innovation is the gesture recognition system. We evaluated multiple approaches — from electromyography (EMG) sensors to infrared tracking — and ultimately chose a computer vision approach using a compact camera module paired with a trained machine learning model.

The camera captures the user’s hand and head movements in real time. An onboard processor runs a gesture classification model that identifies predefined commands:

- Forward motion — open palm pushed forward

- Stop — closed fist

- Turn left — hand tilted left

- Turn right — hand tilted right

- Reverse — palm pulled back

- Speed control — gesture intensity maps to speed
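To make the command set above concrete, here is a minimal Python sketch of how a classifier result could be turned into a drive command. It is an illustration only: the label strings, the `DriveCommand` type, the `to_command` function, and the 0.8 confidence threshold are assumptions for this example, not details of the wheelchair's actual firmware.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DriveCommand:
    linear: float   # -1.0 (full reverse) to 1.0 (full forward)
    angular: float  # -1.0 (full right) to 1.0 (full left)


STOP = DriveCommand(0.0, 0.0)

# Hypothetical label names for the gestures listed above,
# each mapped to a (linear, angular) direction.
GESTURE_DIRECTIONS = {
    "palm_forward": (1.0, 0.0),
    "fist": (0.0, 0.0),
    "tilt_left": (0.0, 1.0),
    "tilt_right": (0.0, -1.0),
    "palm_back": (-1.0, 0.0),
}


def to_command(label: str, confidence: float, intensity: float,
               min_confidence: float = 0.8) -> DriveCommand:
    """Turn one classifier result into a drive command.

    Unknown labels and low-confidence predictions resolve to STOP.
    `intensity` is clamped to [0, 1] and scales the speed.
    """
    if confidence < min_confidence or label not in GESTURE_DIRECTIONS:
        return STOP
    scale = max(0.0, min(1.0, intensity))
    linear, angular = GESTURE_DIRECTIONS[label]
    return DriveCommand(linear * scale, angular * scale)
```

In this sketch, anything the classifier is unsure about resolves to a stop, so an ambiguous gesture can never start movement.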

The model was trained on thousands of gesture samples across different lighting conditions, skin tones, and movement ranges to ensure reliable operation in real-world environments.

For users who have limited hand mobility but can move their head, we implemented an alternative head-tracking mode that maps head tilt directions to wheelchair movement commands.
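One common way to map head tilt to movement is a dead zone followed by linear scaling, so that small involuntary head movements are ignored. The sketch below shows that idea under stated assumptions: tilt angles arrive in degrees, positive pitch means "forward" and positive roll means "left", and the 8 degree dead zone and 25 degree full-scale tilt are placeholder values, not the tuned parameters of the real system.

```python
def axis_from_tilt(angle_deg: float, dead_zone_deg: float = 8.0,
                   max_tilt_deg: float = 25.0) -> float:
    """Map a tilt angle to a value in [-1, 1], ignoring small movements."""
    magnitude = abs(angle_deg)
    if magnitude <= dead_zone_deg:
        return 0.0
    span = max_tilt_deg - dead_zone_deg
    value = min(1.0, (magnitude - dead_zone_deg) / span)
    return value if angle_deg > 0 else -value


def head_to_drive(pitch_deg: float, roll_deg: float) -> tuple[float, float]:
    """Return (linear, angular): pitch drives forward/back, roll turns."""
    return axis_from_tilt(pitch_deg), axis_from_tilt(roll_deg)
```

Both thresholds are natural candidates for per-user adjustment, since comfortable head movement range varies widely between users.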

Hardware Architecture

The wheelchair’s electronic system is built around a central microcontroller that coordinates all subsystems:

Processing unit: A dedicated embedded processor handles the computationally intensive gesture recognition in real time, running the inference model at 30 frames per second to ensure responsive control.

Motor control: Dual brushless DC motors provide smooth, variable-speed drive through custom motor driver circuits. We designed the motor control system to produce gentle acceleration and deceleration curves — sudden movements in a wheelchair are both uncomfortable and dangerous.
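A simple way to get gentle acceleration and deceleration is to limit how much the commanded speed may change on each control tick. The sketch below shows a linear ramp of that kind. The function name and the per-tick limit are assumptions for illustration; the actual motor controller may well use a different profile, such as an S-curve.

```python
def ramp_speed(current: float, target: float, max_delta: float) -> float:
    """Move `current` toward `target` by at most `max_delta` per control tick."""
    delta = target - current
    if delta > max_delta:
        return current + max_delta
    if delta < -max_delta:
        return current - max_delta
    return target
```

Called once per control tick with a limit of 0.25, a jump from rest to full speed is spread over four ticks (0.25, 0.5, 0.75, 1.0) instead of being applied at once.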

Sensor array: Ultrasonic and infrared proximity sensors mounted around the wheelchair detect obstacles in all directions. When an object is detected within a configurable safety radius, the system automatically reduces speed, and it stops the wheelchair if the object comes close enough to risk a collision.

Power management: A rechargeable lithium-ion battery system provides 8-10 hours of continuous operation on a single charge. The power management circuit monitors battery health and provides low-battery warnings through both audio and visual alerts.

Communication module: Bluetooth connectivity allows a companion mobile app to configure gesture sensitivity, speed limits, and control preferences for individual users.

Safety Systems

Safety was engineered into every layer of the system:

Obstacle avoidance — the proximity sensor array creates a safety bubble around the wheelchair. Objects detected within the bubble trigger automatic speed reduction, and objects within the collision zone trigger a full stop.
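The two-zone behaviour described here can be sketched as a speed cap that falls linearly from full speed at the edge of the safety bubble to zero at the collision zone. The radii below (1.5 m and 0.5 m) and the function names are assumptions chosen for the example. The sketch is also deliberately not direction-aware: it caps speed based on the nearest reading from any sensor.

```python
def speed_cap_for_distance(distance_m: float, stop_radius_m: float = 0.5,
                           slow_radius_m: float = 1.5) -> float:
    """Return the allowed fraction of full speed (0.0 to 1.0)."""
    if distance_m <= stop_radius_m:
        return 0.0  # inside the collision zone: full stop
    if distance_m >= slow_radius_m:
        return 1.0  # outside the safety bubble: no restriction
    return (distance_m - stop_radius_m) / (slow_radius_m - stop_radius_m)


def limit_speed(requested: float, sensor_distances_m: list[float]) -> float:
    """Clamp a requested speed in [-1, 1] using the nearest obstacle reading.

    With no readings at all, the nearest distance is treated as 0.0,
    so the chair stays stopped until the sensors report something.
    """
    nearest = min(sensor_distances_m, default=0.0)
    cap = speed_cap_for_distance(nearest)
    return max(-cap, min(cap, requested))
```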

Emergency stop — a physical emergency stop button is always accessible. Additionally, a dedicated gesture (a closed fist held for 2 seconds) triggers an emergency stop that overrides all other inputs.
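Detecting a held gesture comes down to timing how long the classifier has reported it without interruption. The sketch below shows one way to do that. The `HoldDetector` class is hypothetical, and timestamps are passed in explicitly (in seconds) so the logic can be tested without a real clock.

```python
class HoldDetector:
    """Reports True once a gesture has been held continuously long enough."""

    def __init__(self, hold_seconds: float = 2.0):
        self.hold_seconds = hold_seconds
        self._started_at = None  # time the current hold began, if any

    def update(self, gesture_active: bool, now: float) -> bool:
        if not gesture_active:
            self._started_at = None  # any interruption resets the timer
            return False
        if self._started_at is None:
            self._started_at = now
        return now - self._started_at >= self.hold_seconds
```

Resetting the timer on any interruption means a fist that flickers in and out of detection does not accumulate toward the 2 seconds.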

Fail-safe design — if the gesture recognition system loses tracking (due to lighting changes or camera obstruction), the wheelchair defaults to a stopped state rather than continuing its last command. The system requires continuous positive input to maintain movement.
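The "continuous positive input" requirement is commonly implemented as a watchdog: every valid command refreshes a timestamp, and the output falls back to stop when that timestamp gets too old. The sketch below illustrates the pattern. The `CommandWatchdog` class and the 0.3 second timeout are assumptions for this example, not the values used in the wheelchair.

```python
class CommandWatchdog:
    """Passes commands through only while fresh input keeps arriving."""

    STOPPED = (0.0, 0.0)  # (linear, angular)

    def __init__(self, timeout_s: float = 0.3):
        self.timeout_s = timeout_s
        self._last_command = self.STOPPED
        self._last_seen = None  # time of the most recent valid command

    def feed(self, command: tuple[float, float], now: float) -> None:
        """Record a valid command from the gesture recogniser."""
        self._last_command = command
        self._last_seen = now

    def output(self, now: float) -> tuple[float, float]:
        """Return the command to send to the motors at time `now`."""
        if self._last_seen is None or now - self._last_seen > self.timeout_s:
            return self.STOPPED
        return self._last_command
```

If tracking is lost, `feed` simply stops being called, and `output` returns a stop as soon as the timeout elapses.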

Speed limiting — maximum speed can be configured per user and per environment. Indoor mode limits speed for tight spaces, while outdoor mode allows higher speeds on open paths.

Software Integration

The companion mobile app serves three purposes:

Setup and calibration — when a new user begins using the wheelchair, the app walks them through a calibration process that adapts the gesture recognition to their specific movement range and style.
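One plausible form of this calibration is range normalisation: record the smallest and largest movement a user makes during setup, then rescale later readings to that personal range. The sketch below shows the idea with a hypothetical `RangeCalibrator` class. The real calibration may also adapt the recognition model itself, which this example does not attempt.

```python
class RangeCalibrator:
    """Learns a user's movement range, then maps raw readings to [0, 1]."""

    def __init__(self):
        self.low = None
        self.high = None

    def observe(self, raw: float) -> None:
        """Record one raw measurement taken during the calibration session."""
        self.low = raw if self.low is None else min(self.low, raw)
        self.high = raw if self.high is None else max(self.high, raw)

    def normalise(self, raw: float) -> float:
        """Scale a reading to the calibrated range, clamped to [0, 1]."""
        if self.low is None or self.high == self.low:
            return 0.0  # not calibrated yet: report no movement
        value = (raw - self.low) / (self.high - self.low)
        return max(0.0, min(1.0, value))
```

With this approach, a user whose comfortable range is small can still reach full speed, because their own maximum maps to 1.0.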

Real-time monitoring — caregivers or family members can see the wheelchair’s battery status, location, and usage statistics through the app.

Customisation — speed limits, sensitivity levels, gesture mappings, and safety thresholds can all be adjusted through the app without any physical modifications to the hardware.
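Settings like these are typically stored as a per-user profile that is validated before being applied to the chair. The sketch below shows what such a profile could look like. Every field name, default value, and unit here is a placeholder for illustration, not the app's real data model.

```python
from dataclasses import dataclass


@dataclass
class UserProfile:
    name: str
    indoor_limit_kmh: float = 2.0     # placeholder value
    outdoor_limit_kmh: float = 5.0    # placeholder value
    gesture_sensitivity: float = 0.5  # 0.0 = least sensitive, 1.0 = most
    stop_radius_m: float = 0.5        # placeholder value

    def validate(self) -> list[str]:
        """Return a list of problems; an empty list means the profile is usable."""
        problems = []
        if not 0.0 <= self.gesture_sensitivity <= 1.0:
            problems.append("gesture_sensitivity must be between 0 and 1")
        if self.indoor_limit_kmh <= 0 or self.outdoor_limit_kmh <= 0:
            problems.append("speed limits must be positive")
        if self.indoor_limit_kmh > self.outdoor_limit_kmh:
            problems.append("indoor limit should not exceed outdoor limit")
        if self.stop_radius_m <= 0:
            problems.append("stop_radius_m must be positive")
        return problems

    def speed_limit(self, mode: str) -> float:
        """Look up the limit for 'indoor' or 'outdoor' mode."""
        limits = {"indoor": self.indoor_limit_kmh,
                  "outdoor": self.outdoor_limit_kmh}
        return limits[mode]
```

Rejecting an invalid profile before it reaches the chair keeps a mistyped value in the app from weakening a safety threshold.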

Results and Impact

The working prototype demonstrated several key achievements:

Gesture recognition accuracy: 94% recognition rate across all tested conditions, with a false positive rate below 1% for movement commands.

Response latency: Less than 200 milliseconds from gesture detection to motor response — fast enough to feel natural and responsive to the user.

Battery life: 8+ hours of continuous operation, sufficient for a full day of use.

User feedback: During user testing, participants reported that the gesture control felt intuitive after approximately 15 minutes of practice — significantly shorter than the learning curve for sip-and-puff alternatives.

What This Project Demonstrates

This wheelchair project showcases what’s possible when robotics, AI, and human-centred design come together with a clear purpose. It also illustrates several broader principles that apply to any custom hardware project:

Start with the user, not the technology. We didn’t begin by choosing sensors and processors — we began by understanding the users’ abilities and limitations. The technology choices followed from the human requirements.

Prototype early and test often. By building working prototypes early in the process, we could test with real users and iterate on the design before committing to final manufacturing decisions.

Safety is not optional. In any system that directly affects human safety — whether it’s a wheelchair, a medical device, or an industrial robot — safety systems must be designed in from the beginning, not bolted on at the end.

Software amplifies hardware. The physical wheelchair is important, but it’s the software — the gesture model, the mobile app, the safety algorithms — that makes it truly intelligent and adaptable.

Custom Robotics and Assistive Technology

At Wilnet Solutions, assistive technology is one of the areas where we believe custom hardware development has the most meaningful impact. The intersection of robotics, AI, and accessibility creates opportunities to significantly improve people’s quality of life.

Our robotics capabilities extend beyond wheelchairs to include collaborative robots, robotic arms for industrial applications, and STEM education robots that teach the next generation of engineers. Every project starts with a clear understanding of the problem and ends with a purpose-built solution.

Wilnet Solutions designs and builds custom robotics, IoT devices, and embedded systems in Australia.